DESIGN

How did the game design come together?

Designing a New Solution

The Problems:

Based on my research, I found that existing language-learning mobile apps, like Duolingo, don’t help people achieve fluency because they do not offer adequate practice in reading, speaking, or listening. They also give users little autonomy over what they learn.

Existing AI chatbot applications for language learning are not beginner-friendly and leave beginner users without adequate help or feedback during conversations.

Goals for a New Concept

-

Goal 1

Give beginner learners prompts for speaking in their target language to build confidence.

-

Goal 2

Give users the freedom to choose the context or scenario they learn in.

-

Goal 3

Give users the ability to learn through speaking, reading, and listening.

Process


I followed a variation of the Design Thinking process:

Understand, Define, Ideation, Design, Test, and Refine

Ideation

I initially started by trying to make a better AI chatbot, but I later pivoted to designing a choose-your-own-adventure game.

The pivot came while I was sketching: adding answer choices to a chatbot-style screen reminded me of the choose-your-own-adventure books I used to read and the games I used to play.

I thought this concept would interest users and hold their attention long enough for them to get the practice they need to become fluent.

The images in this section show some of my inspiration, along with documentation of the ideation process.

Wireframes

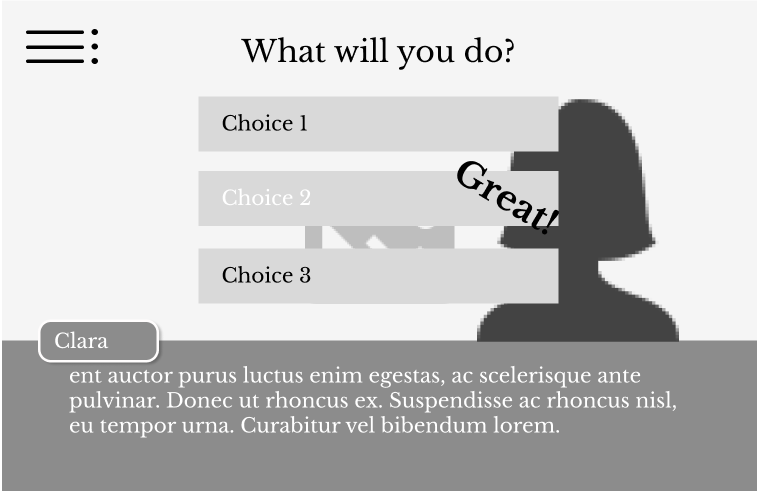

I designed the initial wireframes in Figma, focusing on the basic gameplay.

To stay aligned with the goals of supporting user input, output, and feedback, the wireframes let the user listen to the available options and then required them to speak their choice out loud before moving forward. The game would provide some form of pronunciation feedback so that the user could learn.

Design

Following the initial wireframes, I built the game in three parts: introduction screens, tutorial screens, and gameplay screens.

My idea was that an AI-generated version of the game would not require creating every scene and answer choice by hand. For the design process, however, each one had to be created manually.

The images below outline the original user flow for the game.

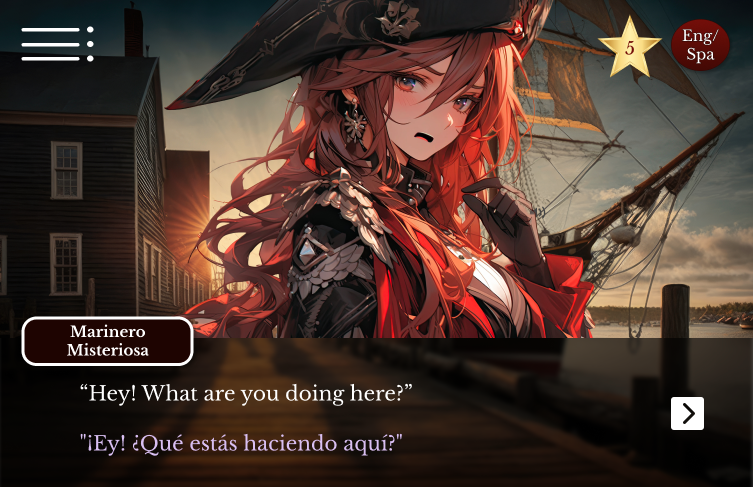

The home screen is inspired by online visual novels. All of the images, as well as the name of the game, came from ChatGPT-4 and DALL-E.

User name screen

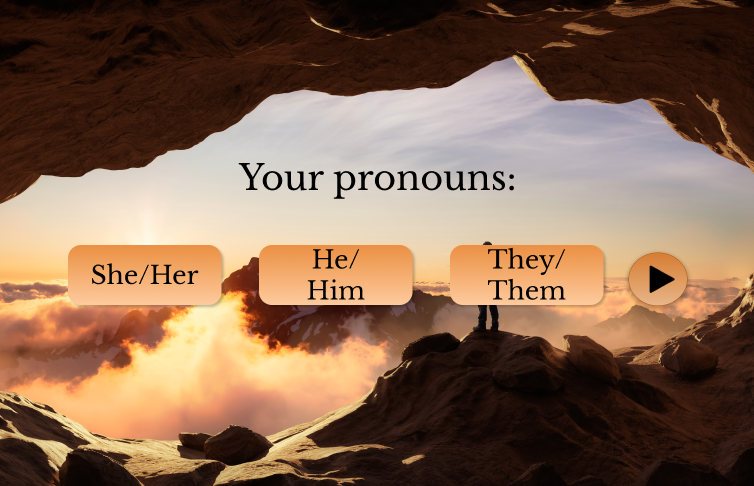

User pronouns screen. This is to capture how the user would like to be addressed.

Choose your language

Choose-your-own-adventure screen. The original design included this screen to create guardrails for the game's AI.

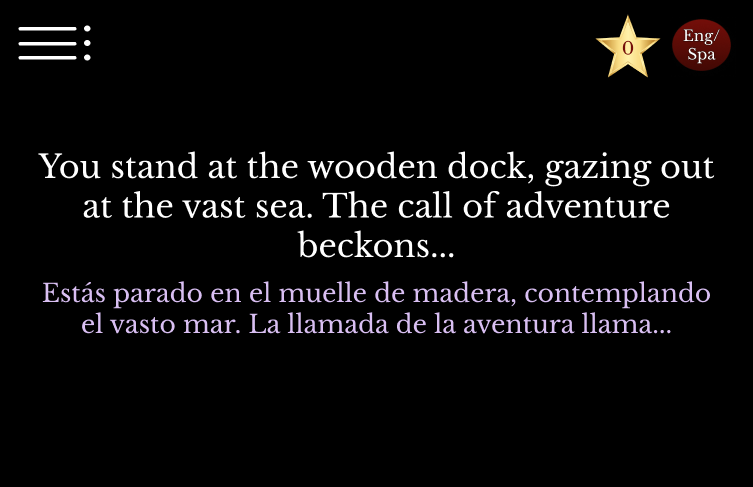

Game starting screen. The intention of this screen was to set the context of the story for the user.

Quick tutorial to ground the user.

Tutorial part 2

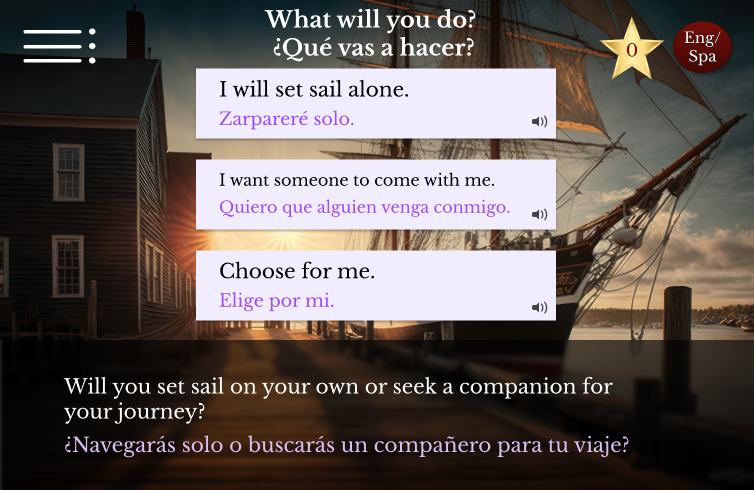

The user is then presented with their first choice.

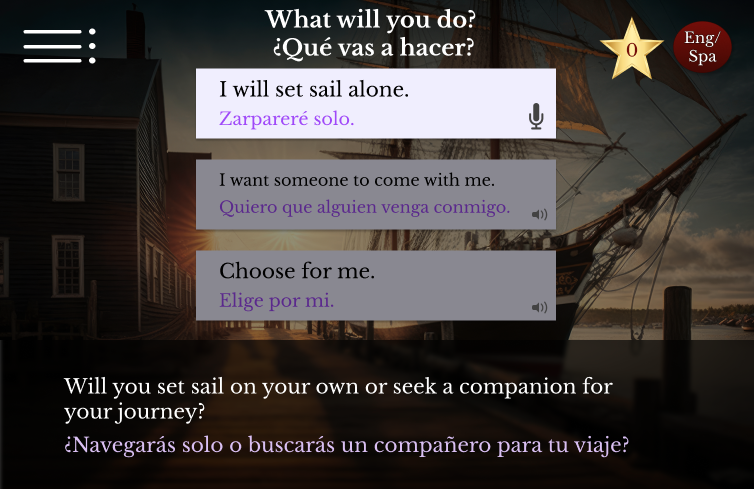

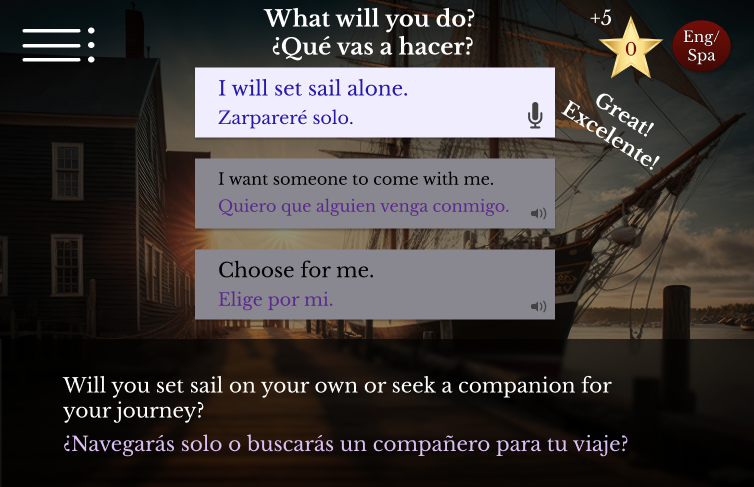

Once the user selects a choice, they are expected to speak the words in Spanish out loud to move forward. The game is intended to give feedback on pronunciation, and this is how the user earns points.

When the pronunciation is correct, the user can move forward in the game.

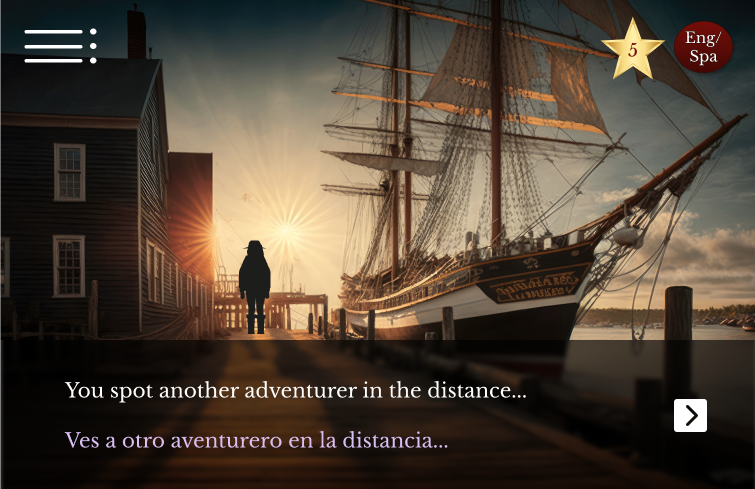

The next scene in the story then begins and continues with various choices the user has to make.

Along the way, users meet characters they can practice conversation with.

For an added challenge, the user can switch entirely to Spanish at any time.

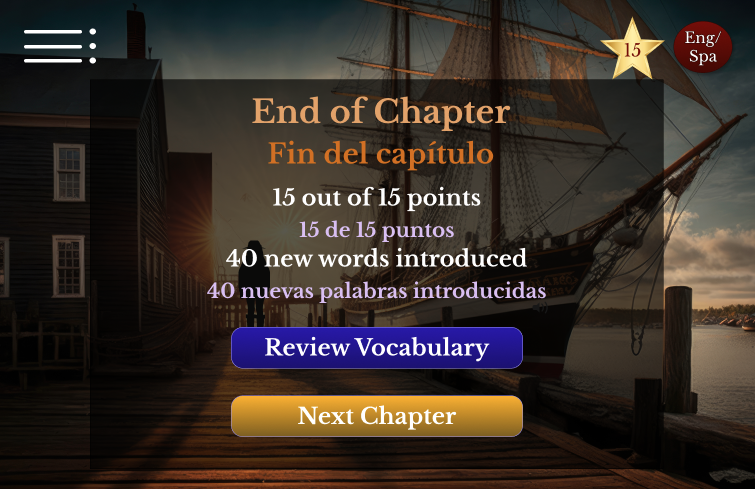

At the end of the chapter, users can review the vocabulary that they learned or move on to the next chapter.

Test

Up to this point, there had been no user feedback. For a new idea like this, feedback was essential to show whether the design was heading in the right direction for the target audience, so I ran a small usability test with 6 potential users.

I wanted participants who were familiar with AI technology and were beginner Spanish learners.

The test had three objectives: to gauge whether users could navigate the prototyped flow independently, to assess the idea's usefulness and interest, and to gather suggestions for improvement.

Usability Test Details

-

What?

A remote, unmoderated usability test that captured completion rate, ease of use, user interest in the concept, usefulness to the user, and ideas or suggestions for functionality.

-

Who?

6 U.S.-based adults aged 20 to 55. All are familiar with generative AI tools, and all are beginner Spanish learners.

-

How?

The users all played through the demo on their own and answered a series of questions.

Usability Test Results

6 out of 6 participants made it through the game demo without errors or assistance.

5 of the 6 participants rated the game concept as “Very Interesting” on a 1-7 rating scale.

6 out of 6 participants rated the game “Very Easy to Understand” on a 1-7 scale.

Suggestions for improvement included hover actions on Spanish words that reveal translations and conjugations, adding Natural Language Processing (NLP) to give feedback on user pronunciation, and adding more on-screen text for actions the game needs the user to perform, such as when to speak.

Usability Test Participant

“I love the concept of gamifying learning another language, and I appreciate how straightforward and simple it is to play the game. I could definitely see myself using this to learn a language. Well done!”

Usability Test Participant

“I think the concept is great, and the experience is also great, though I did grow up on the ‘choose your own adventure’ books and early graphic adventure video games.”

Refinement and Conclusion

Based on the results of the usability test and on peer and instructor feedback, I made the following adjustments to the game design to make it clearer and more beneficial for the user.

To create the best experience possible, more iterations and testing would be necessary. Continue to the next page for reflections and conclusions.